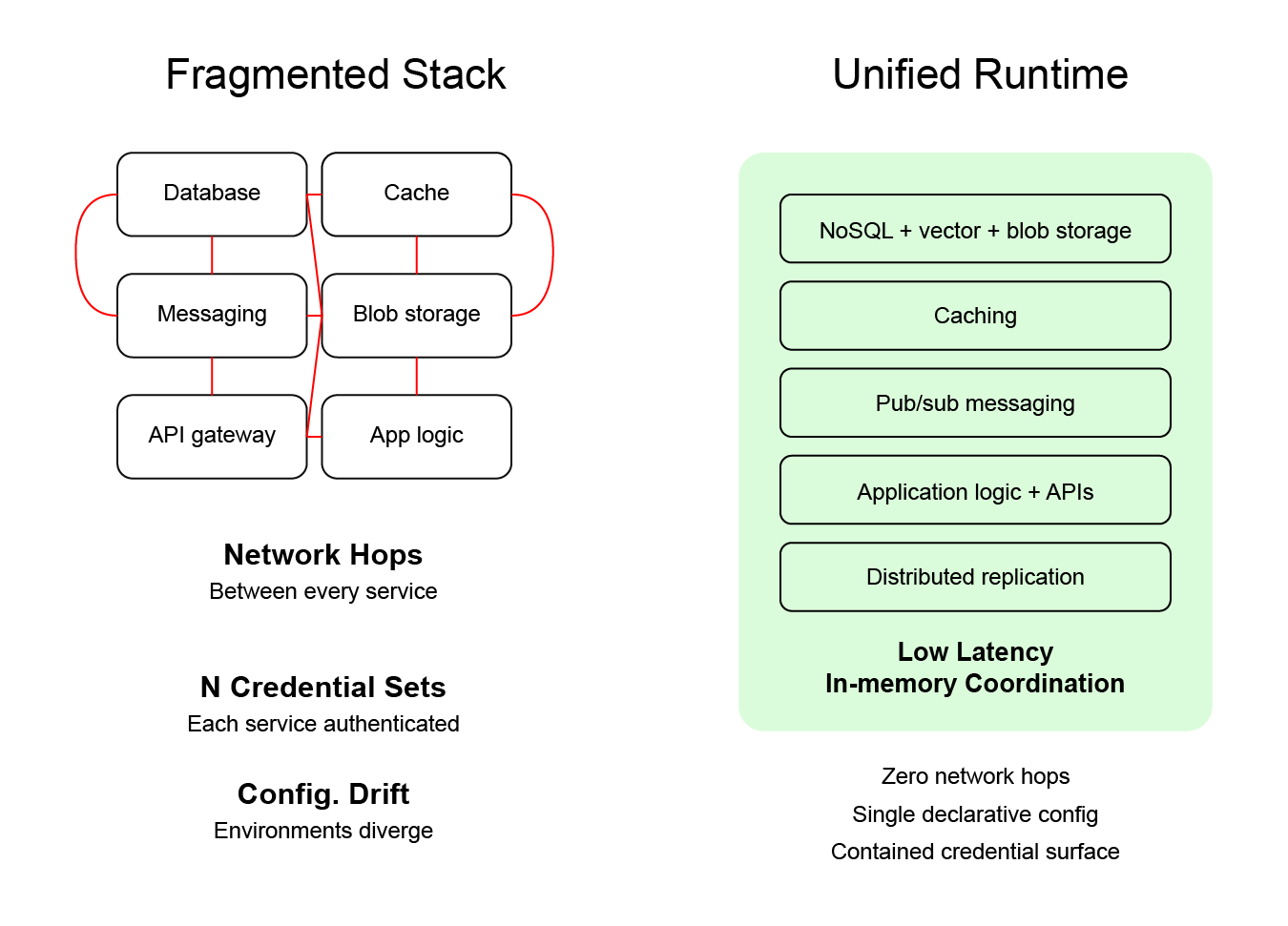

Modern application stacks are complicated by default. A typical architecture might include a database, cache, message broker, API layer, blob storage, and several operational services glued together across networks and environments. None of those components are inherently wrong. Each one solves a specific problem well. But taken together, they create a fragmented system that developers must constantly coordinate.

That fragmentation becomes even more problematic in the agentic era. AI systems are beginning to participate in the design, modification, and deployment of applications. Those systems work best when the environment they interact with is legible and structured. A scattered infrastructure landscape, where every capability lives in a different system, creates friction for both humans and AI.

A unified runtime is one architectural response to this problem.

At its simplest, a unified runtime collapses multiple layers of application infrastructure into a single runtime environment. Instead of database, cache, messaging, and application logic living in separate systems, they operate together within a single platform. That reduces the number of moving parts and removes many of the boundaries that introduce latency and operational complexity.

This approach also has an important security implication that becomes more visible in the agentic era. When infrastructure is fragmented across many services, any automated system participating in development must be granted access across that entire surface area. That is one of the core concerns organizations have about letting AI interact with production systems.

A unified runtime reduces that exposure by collapsing much of the operational surface into a single, declarative environment that can be controlled more predictably.

We will come back to that security benefit later. For now, it helps explain why the unified runtime concept is gaining attention as software development becomes more AI-assisted.

The Problem With Fragmented Application Stacks

For years, modern architecture has encouraged teams to break systems into specialized components. Databases manage storage. Redis handles caching. Kafka or RabbitMQ manages messaging. Serverless functions handle logic. API gateways route traffic.

Each component can be excellent on its own. But every additional service introduces new coordination costs:

- Network hops between systems

- Serialization and deserialization of data

- Cross-service authentication

- Cache invalidation patterns

- Operational monitoring across multiple platforms

- Configuration drift across environments

The result is a stack that often works but is harder to reason about than it should be.

That complexity becomes more visible when teams attempt to automate parts of the development lifecycle. AI systems attempting to modify or generate production systems must navigate not only the code but the surrounding infrastructure relationships. Every external system becomes another boundary that must be understood.

A unified runtime changes the shape of that problem.

What a Unified Runtime Actually Means

A unified runtime is not simply bundling services together. It is an architectural model where the core infrastructure layers of an application operate within the same runtime environment.

In Harper’s implementation, the runtime combines the following into a single high-performance environment:

- Data storage (NoSQL database, vector, and blob storage)

- Caching

- Messaging

- Application logic

- APIs

- Distributed replication

This design eliminates many of the coordination costs associated with traditional stacks. Data access, caching behavior, and application execution occur inside the same runtime rather than across multiple network boundaries.

From an engineering perspective, this removes several performance bottlenecks:

- Fewer network round trips

- Reduced serialization overhead

- Tighter coordination between compute and data

- Simpler cache consistency models

These benefits are not theoretical. They are structural outcomes of collapsing system boundaries.
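
To make the boundary-collapsing argument concrete, here is a minimal sketch that counts serialization crossings for a single read. It is an illustration under stated assumptions, not Harper's API: `Boundary`, `readFragmented`, and `readUnified` are hypothetical names, and the "network" is simulated with a JSON round trip.

```typescript
// Hypothetical sketch: none of these names come from Harper's API.
type Product = { id: string; name: string; priceCents: number };

// Simulates a network boundary: each crossing serializes the payload on one
// side, parses it on the other, and increments a counter.
class Boundary {
  crossings = 0;
  send<T>(payload: T): T {
    this.crossings += 1;
    return JSON.parse(JSON.stringify(payload)) as T;
  }
}

// Fragmented path on a cache miss: app -> cache -> app -> database -> app.
// That is two requests and two responses, so four crossings in total.
function readFragmented(
  id: string,
  db: Map<string, Product>,
  net: Boundary
): Product | undefined {
  net.send({ op: "cache.get", id }); // request to the cache
  net.send(null);                    // cache-miss response
  net.send({ op: "db.get", id });    // request to the database
  const response = net.send(db.get(id) ?? null); // database response
  return response ?? undefined;
}

// Unified path: logic and data share a process, so the read is a direct
// in-memory lookup with no serialization step.
function readUnified(id: string, table: Map<string, Product>): Product | undefined {
  return table.get(id);
}

const productTable = new Map<string, Product>([
  ["p1", { id: "p1", name: "Lamp", priceCents: 2500 }],
]);
const simulatedNetwork = new Boundary();
const viaNetwork = readFragmented("p1", productTable, simulatedNetwork);
const inProcess = readUnified("p1", productTable);
```

In this toy model the fragmented read crosses four boundaries and returns a copy of the record, while the unified read crosses none and returns the stored object itself. Real stacks add connection handling, authentication, and retries on top of each crossing.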

A Performance-First Architecture

The unified runtime model is fundamentally a performance-first design.

When core infrastructure layers share the same runtime, latency drops because requests do not have to traverse multiple network services. Data access can occur directly in memory rather than across service boundaries. Coordination between components becomes significantly faster.

At the same time, Harper’s architecture scales horizontally across distributed environments. That means performance gains do not come at the cost of scalability. Additional nodes increase total throughput, and when those nodes are geographically distributed, average latency can also drop because requests are served by a node closer to the user.

In practice, this combination creates two simultaneous advantages:

- Lower request latency

- Higher total system throughput

Many architectures optimize for one of those metrics at the expense of the other. The distributed unified runtime approach improves both by reducing internal communication overhead while allowing the system to scale across multiple geographically separated nodes.

This is why Harper often describes its runtime as both high-performance and distributed. The goal is not simply to run applications faster on one machine. The goal is to maintain fast response times while the system grows.

For applications where latency directly affects user experience, such as e-commerce, real-time data systems, IoT environments, or high-frequency APIs, those architectural choices matter.
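
The latency and throughput argument in this section can be sketched as a back-of-envelope model. Everything here is an assumption made for illustration: the function names are hypothetical, the millisecond figures are invented rather than measured, and throughput is assumed to scale linearly with node count, which real systems only approximate.

```typescript
// Back-of-envelope model. All numbers are illustrative assumptions,
// not benchmarks of Harper or any other system.
interface RequestPath {
  clientRttMs: number;  // round trip between the user and the serving node
  networkHops: number;  // internal round trips between separate services
  hopLatencyMs: number; // assumed cost of one internal round trip
  computeMs: number;    // time spent in application logic
}

function estimateLatencyMs(path: RequestPath): number {
  return path.clientRttMs + path.networkHops * path.hopLatencyMs + path.computeMs;
}

// Simplifying assumption: each node adds the same capacity.
function estimateThroughputRps(nodes: number, perNodeRps: number): number {
  return nodes * perNodeRps;
}

// A centralized, fragmented stack: distant user, four internal hops.
const fragmentedPath: RequestPath = {
  clientRttMs: 40,
  networkHops: 4,
  hopLatencyMs: 2,
  computeMs: 3,
};

// A distributed unified runtime: nearby node, no internal hops.
const unifiedPath: RequestPath = {
  clientRttMs: 10,
  networkHops: 0,
  hopLatencyMs: 2,
  computeMs: 3,
};

const fragmentedMs = estimateLatencyMs(fragmentedPath); // 40 + 4 * 2 + 3 = 51
const unifiedMs = estimateLatencyMs(unifiedPath);       // 10 + 0 * 2 + 3 = 13
const clusterRps = estimateThroughputRps(3, 1000);      // 3 * 1000 = 3000
```

The model separates the two effects described above: removing internal hops shrinks the middle term, and placing nodes closer to users shrinks the first term, while node count drives throughput independently of both.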

The Security Implications in the Agentic Era

Earlier, we touched on the security challenges that arise when AI systems begin participating in development and operations.

The issue is not simply that AI might write code. The issue is that AI may need visibility into the infrastructure (and often data) to modify or deploy systems effectively.

In traditional stacks, that infrastructure is spread across many platforms:

- Cloud storage services

- Databases

- Caches

- Messaging systems

- Deployment tools

- Configuration environments

Granting automated systems access across that landscape can quickly become risky.

Kris Zyp’s article, “The Security Problem in Agentic Engineering has an Architectural Solution,” describes how this fragmentation creates a new security challenge. AI systems must navigate multiple external services, each with its own permissions and credentials. The more fragmented the infrastructure, the larger the operational surface that must be exposed.

A unified runtime reduces that surface area.

Because core application infrastructure lives inside the same runtime environment, there are fewer external systems that must be accessed or coordinated. The application, its data structure, and much of its operational behavior are defined within a set of declarative files.

This does not eliminate all security concerns. No architecture does. But it changes the shape of the problem. Instead of protecting a web of loosely connected services, organizations can reason about a contained full-stack environment.

For enterprises exploring AI-assisted development, that containment becomes extremely valuable.
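
One rough way to picture the surface-area argument is to count what an automated agent would need to be granted in each model. The sketch below is hypothetical: `AccessGrant`, `exposedSurface`, the system names, and the scope lists are invented for illustration and do not describe any real platform's permission model.

```typescript
// Hypothetical model of the access an automated agent must be granted.
interface AccessGrant {
  system: string;     // the platform the credential belongs to
  credential: string; // placeholder label, not a real secret
  scopes: string[];   // permissions attached to that credential
}

interface Surface {
  systems: number;
  credentials: number;
  scopes: number;
}

// Counts distinct systems and credentials, plus the total number of scopes.
function exposedSurface(grants: AccessGrant[]): Surface {
  return {
    systems: new Set(grants.map((g) => g.system)).size,
    credentials: new Set(grants.map((g) => g.credential)).size,
    scopes: grants.reduce((total, g) => total + g.scopes.length, 0),
  };
}

// Fragmented stack: one grant per platform listed earlier in this section.
const fragmentedGrants: AccessGrant[] = [
  { system: "object-storage", credential: "storage-key", scopes: ["read", "write"] },
  { system: "database", credential: "db-user", scopes: ["read", "write", "migrate"] },
  { system: "cache", credential: "cache-token", scopes: ["read", "write", "flush"] },
  { system: "message-broker", credential: "broker-user", scopes: ["publish", "subscribe"] },
  { system: "deploy-tool", credential: "deploy-token", scopes: ["deploy"] },
  { system: "config-store", credential: "config-key", scopes: ["read", "write"] },
];

// Unified runtime: a single role covering the contained environment.
const unifiedGrants: AccessGrant[] = [
  { system: "unified-runtime", credential: "runtime-role", scopes: ["read", "write", "deploy"] },
];

const fragmentedSurface = exposedSurface(fragmentedGrants);
const unifiedSurface = exposedSurface(unifiedGrants);
```

With these made-up inputs, the fragmented stack exposes six systems, six credentials, and thirteen scopes, against one system, one credential, and three scopes for the unified case. The absolute numbers mean little; the point is that every additional platform adds its own credential and permission set to audit.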

Why This Architecture Fits the Agentic Era

The agentic era introduces a new constraint on infrastructure design: systems must be understandable not only by humans but also by automated reasoning systems.

AI works best when the system it interacts with is legible. A runtime that exposes a declarative, full-stack environment is far easier for automated tools to interpret than a scattered collection of infrastructure services.

In practical terms, this means AI systems can:

- Understand application structure more clearly

- Propose changes more safely

- Reason about infrastructure relationships

- Generate production-ready modifications with less guesswork

This does not make the application itself simpler. Complex products will remain complex. But it reduces the accidental complexity of the platform underneath them.

That reduction is one reason unified runtimes are becoming more compelling as AI becomes part of the development process.
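
As a rough illustration of what “legible” can mean in practice, consider a single declarative description that a tool can read to answer structural questions. The manifest shape below is entirely hypothetical and is not Harper's configuration format; `AppManifest`, `TableSpec`, and `describeApp` are names invented for this sketch.

```typescript
// Hypothetical manifest shape; this is not Harper's configuration format.
interface TableSpec {
  name: string;
  primaryKey: string;
  exposedViaApi: boolean;
  cacheTtlSeconds?: number; // present only when the table acts as a cache
  publishesChanges: boolean;
}

interface AppManifest {
  name: string;
  tables: TableSpec[];
}

const storefrontManifest: AppManifest = {
  name: "storefront",
  tables: [
    {
      name: "Product",
      primaryKey: "id",
      exposedViaApi: true,
      cacheTtlSeconds: 60,
      publishesChanges: true,
    },
    {
      name: "AuditLog",
      primaryKey: "id",
      exposedViaApi: false,
      publishesChanges: false,
    },
  ],
};

// A tool can answer "what is exposed, cached, or published?" by reading one
// document instead of querying several separate platforms.
function describeApp(app: AppManifest): string[] {
  return app.tables.map((t) => {
    const api = t.exposedViaApi ? "API" : "internal";
    const cache =
      t.cacheTtlSeconds !== undefined ? `cached ${t.cacheTtlSeconds}s` : "uncached";
    const events = t.publishesChanges ? "publishes changes" : "silent";
    return `${t.name}: ${api}, ${cache}, ${events}`;
  });
}

const manifestSummary = describeApp(storefrontManifest);
```

In a fragmented stack, the same three facts about `Product` would typically live in an API gateway configuration, a cache policy, and a broker topic definition, each in its own format.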

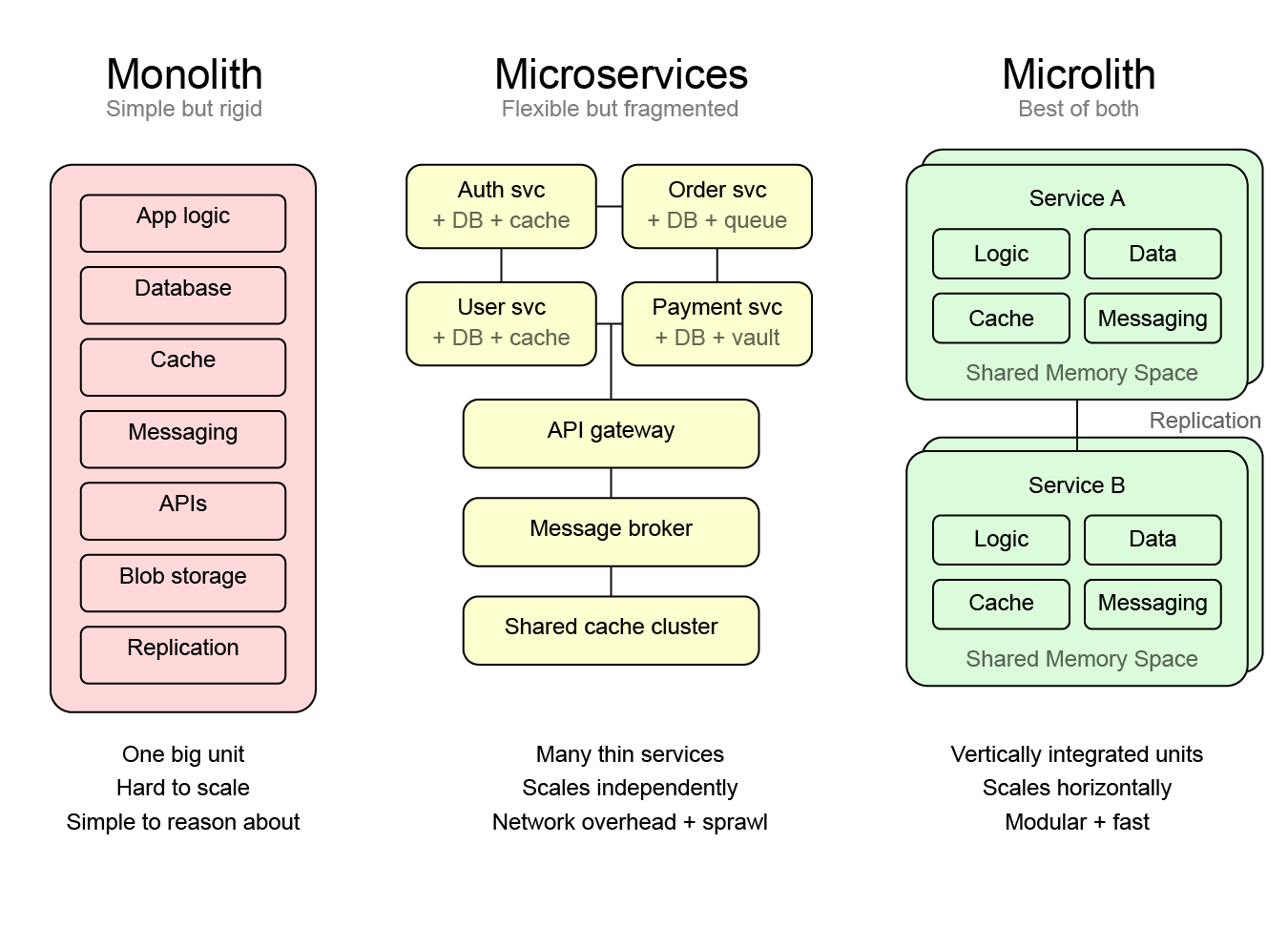

A Misconception Worth Acknowledging

When engineers hear “unified runtime,” the first concern is that it sounds like a return to the monolith. That concern is understandable, but it misses how the architecture is actually used.

A unified runtime does not mean putting an entire system into one giant application. In practice, systems are still split into logical services. The difference is that each service runs as a vertically integrated unit rather than as multiple thin layers within a larger microservice web.

A useful way to think about this is the microlith. If you want a deeper explanation, see “Performance and Simplicity with Distributed Microliths.”

A microlith keeps the modular boundaries teams want while running application logic, data, and messaging inside the same runtime. That means components communicate in memory rather than via network APIs, which removes significant latency and coordination overhead.
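
The in-memory communication described above can be sketched with a toy service that keeps storage, messaging, and logic in one process. This is a minimal illustration, not Harper's implementation: the `Microlith` class and its `subscribe`, `put`, and `get` methods are hypothetical names.

```typescript
// Hypothetical microlith sketch; class and method names are illustrative only.
type Listener<T> = (record: T) => void;

class Microlith<T extends { id: string }> {
  private table = new Map<string, T>();
  private listeners: Listener<T>[] = [];

  // Messaging: subscribers are plain in-process callbacks.
  // Returns a function that removes the subscription.
  subscribe(listener: Listener<T>): () => void {
    this.listeners.push(listener);
    return () => {
      this.listeners = this.listeners.filter((l) => l !== listener);
    };
  }

  // Storage plus messaging: a write updates the table and notifies
  // subscribers synchronously, without leaving the process.
  put(record: T): void {
    this.table.set(record.id, record);
    for (const listener of this.listeners) listener(record);
  }

  // Application logic reads the same in-memory table directly.
  get(id: string): T | undefined {
    return this.table.get(id);
  }
}

type Order = { id: string; status: "placed" | "shipped" };

const orderService = new Microlith<Order>();
const seenStatuses: string[] = [];
const unsubscribe = orderService.subscribe((order) => {
  seenStatuses.push(order.status);
});

orderService.put({ id: "o1", status: "placed" });
orderService.put({ id: "o1", status: "shipped" });
unsubscribe();
orderService.put({ id: "o2", status: "placed" }); // stored, but no longer observed
```

Each logical service would be its own instance of something like this, so modular boundaries remain between services while the storage, messaging, and logic inside one service share memory.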

You still deploy multiple services. You still scale horizontally. The difference is that each service is a coherent runtime rather than a collection of loosely connected infrastructure pieces.

The result is a system that keeps the modularity of microservices while avoiding the performance and operational penalties that microservice sprawl often introduces.

The Bigger Shift

The most important change happening right now is not the rise of agents themselves.

It is the shift in how software gets built.

More systems will involve AI in development. More infrastructure will need to be understandable to automated tools. And more applications will be expected to move from idea to production faster than before.

A unified runtime supports that shift by removing unnecessary fragmentation from the platform layer.

Instead of stitching together a dozen services and hoping they behave well together, teams can work within a runtime designed to support the full lifecycle of an application.

The application remains the product.

The runtime simply makes it easier to build.
